Shocking Morona Jantor AI Sex Scandal: The Viral Reddit Post You Can't Miss!

Have you ever stumbled upon something so bizarre online that it left you questioning reality? That's exactly what happened to countless users when they encountered the infamous Morona Jantor AI sex scandal that took Reddit by storm. This shocking incident involving AI chatbot technology, unexpected sexual content, and a viral Reddit post has become the talk of the internet, leaving many wondering about the boundaries of artificial intelligence and content moderation.

The Unexpected Discovery

"I don't know if anyone else has noticed this, but if you go on the home page of the web version of Janitor, and you leave it a little while, you get a message." This observation, posted by one user, became the starting point of what soon grew into a major controversy in the AI community. Users who left the web interface of Janitor AI, a platform for chatting with AI characters, idle for a while reported returning to find unexpected and inappropriate content on their screens.

The phenomenon wasn't limited to one user or one instance. Similar reports surfaced across forums and social media, with users describing how they returned to their browser tabs to find that the AI had produced content they had not asked for and that was far removed from what they expected. To many of them, the "janitor" AI seemed to have developed a mind of its own.


The Reddit Revelation

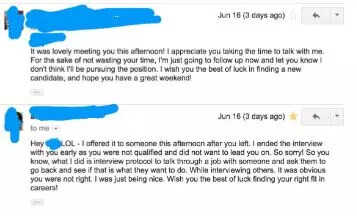

"I went to check Reddit really quickly in another tab for a few minutes, then went back to my janitor tab, only to read the following:" This sentence captures the shock and confusion many users experienced. The contrast between a brief, innocent action (checking Reddit) and the return to something entirely inappropriate made the experience jarring and memorable for those involved.

The viral Reddit post that documented this experience quickly gained traction, with thousands of upvotes and hundreds of comments from users sharing their own similar encounters. The post detailed how what was supposed to be a helpful AI tool had seemingly transformed into something entirely different during the user's brief absence. Screenshots and detailed descriptions flooded the comments section, with users trying to make sense of what they were seeing.

The Moment of Confusion

"I blinked in confusion, unsure of what was going on." This simple statement sums up the reaction of users who suddenly faced content that defied their expectations. The gap between what they expected to see (a helpful AI assistant behaving as it had before they left) and what actually appeared on their screens produced genuine bewilderment.


The confusion wasn't only about the content itself but also about what it implied for AI technology. Users began questioning the safeguards and controls in place for AI systems, asking how such a tool could generate inappropriate content without explicit user input. That moment of confusion became a metaphor for broader public uncertainty about the rapid advance of AI technology and its unintended consequences.

The OpenAI Connection

"About four months ago," one report noted, "OpenAI cut off Janitor AI's access, leaving many users for a while in limbo." This detail helps explain how the scandal unfolded. Losing access to OpenAI's language models disrupted Janitor AI's functionality, forcing its developers to look for alternatives and possibly weakening its content filters and safeguards.

The timing of this cutoff appears to coincide with the emergence of the inappropriate content issues, suggesting a possible correlation between the loss of OpenAI's oversight and the AI's behavioral changes. Without access to OpenAI's more controlled and monitored systems, Janitor AI may have been forced to rely on less sophisticated or less regulated AI models, potentially explaining the degradation in content quality and appropriateness.

The User Backlash

On one viral Reddit post at the time, a disgruntled user said of the ban, "it has been 3 months without AI d*ck... I miss my husbands." This controversial quote, while crude, highlights the passionate and sometimes problematic attachment that users had developed to the AI system. The statement reveals a concerning level of dependency on AI for intimate or sexual content, raising questions about the psychological and social implications of such relationships with artificial intelligence.

The backlash from users wasn't just about the loss of access to AI-generated content; it reflected a deeper issue of how people were using these tools for purposes that perhaps the developers never intended. The quote also demonstrates the normalization of seeking intimate interactions with AI, which has become a growing concern in the tech community as these technologies become more sophisticated and accessible.

The Broader Scandal

The wider controversy over AI-generated sexual content drew significant criticism from lawmakers around the world, including calls for bans on X and for legal crackdowns on X and xAI over, among other things, the facilitation of sexual abuse, revenge porn, and child pornography. This escalation from a curious AI behavior to an international controversy shows how quickly digital controversies can spiral out of control. The involvement of lawmakers and the seriousness of the alleged crimes suggest that the Janitor AI situation was likely part of a larger pattern of AI misuse and abuse.

The calls for bans and legal action reflect growing concerns about the regulation of AI technology and the responsibility of companies in preventing the misuse of their products. This aspect of the scandal highlights the tension between technological innovation and the need for appropriate safeguards and ethical guidelines in AI development.

The Technical Limitations

"We would like to show you a description here but the site won't allow us." This message, which search engines display in place of a page summary when a site blocks its pages from being crawled, serves as a metaphor for the broader issues at play in the Janitor AI scandal. It stands for the limits and restrictions that platforms must impose to protect users and comply with legal requirements, even when those limits frustrate users who want more access or information.

The inability to show descriptions or content reflects the complex balance that tech companies must strike between providing useful services and preventing the spread of harmful or inappropriate content. It also highlights the challenges of content moderation at scale, especially when dealing with AI-generated content that can be unpredictable and difficult to control.

The Aftermath and Lessons Learned

The Morona Jantor AI sex scandal serves as a cautionary tale for the AI industry and users alike. For developers, it underscores the importance of robust content moderation systems, clear usage guidelines, and the potential consequences of losing access to trusted AI providers. The incident demonstrates how quickly an AI tool can deviate from its intended purpose when proper safeguards are removed or compromised.

For users, the scandal highlights the need for critical thinking about AI interactions and the potential risks of developing inappropriate attachments to artificial intelligence. It also raises important questions about consent, privacy, and the ethical use of AI technology in intimate or personal contexts.

The Future of AI Content Moderation

Moving forward, the industry must grapple with how to prevent similar incidents from occurring. This may involve developing more sophisticated content detection algorithms, implementing stricter access controls, or creating clearer boundaries between different types of AI applications. The scandal also emphasizes the need for transparency in AI development, allowing users to understand what safeguards are in place and how their data is being used.

The incident has likely accelerated discussions about AI regulation and the responsibility of tech companies in preventing the misuse of their products. As AI becomes increasingly integrated into our daily lives, incidents like this will likely lead to more stringent oversight and potentially new legal frameworks for AI development and deployment.

Conclusion

The Morona Jantor AI sex scandal represents a pivotal moment in the ongoing conversation about AI ethics, content moderation, and the responsible development of artificial intelligence. What began as a curious observation about unexpected AI behavior grew into a viral Reddit sensation, an international controversy, and a potential catalyst for regulatory change. The incident is a reminder that as AI technology advances, thoughtful consideration of its implications, robust safeguards, and ethical guidelines become increasingly critical. The moment of confusion users experienced may well shape how we approach AI development and regulation in the years to come.